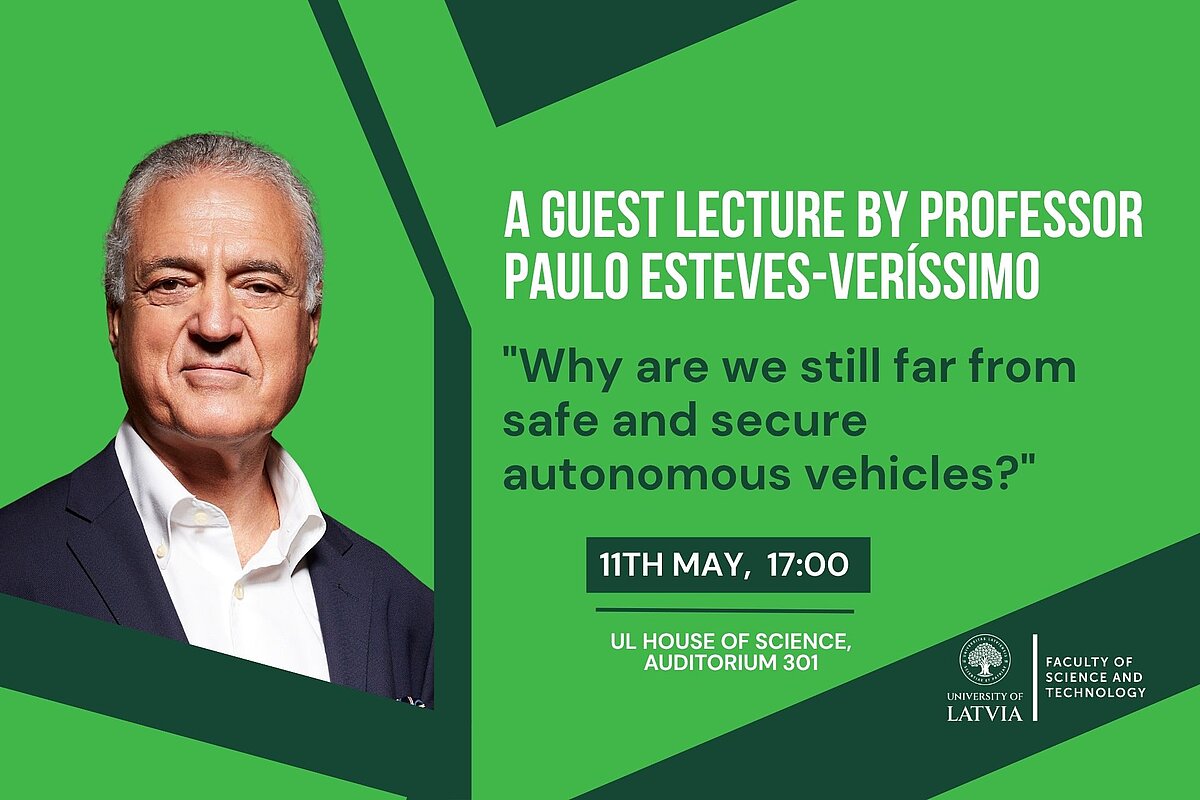

Paulo Esteves-Verissimo is a former Professor and Director of the RC3 Center for Resilient Computing and Cybersecurity at KAUST U. (KSA), and currently a Research Fellow at the Univ. of Lisbon. Previously, he was full professor, FNR PEARL Chair and Head of the CritiX lab at the U. of Luxembourg; professor and Director of the LASIGE center at the U. of Lisbon; and professor at T.U. Lisbon (PT). He was also an adjunct professor at Carnegie Mellon U. (USA). In his talk, he will explore why autonomous vehicles are still far from being fully safe and secure, despite rapid technological progress. The presentation will highlight both the advancements and the key challenges, particularly those related to the use of Artificial Intelligence and Machine Learning (AI/ML).

Paulo Esteves-Verissimo says: “Four years ago, I gave a talk with a similar title, about the pitfalls and enablers of Autonomous Vehicles (AV). Time has passed very fast indeed. Some new enablers appeared, we did not solve most of the pitfalls and … those new enablers (AI/ML) brought even more pitfalls. From (2022): «… AVs, though using extensive fault-tolerance e.g., in x-by-wire functions, are still not quite safe from an accidental faults perspective.» and «… present an even greater threat surface, when accidental faults are combined to malicious attacks.» To (2026): The attractive functional power of current AV architectures must be put in context with an equally significant number of related serious or fatal accidents. Why is that? Car makers have been very slow to recognize these pitfalls, with potentially harmful results. To make matters worse, simultaneously ensuring the properties of Safety and Security is a hard problem. I cut through this state of play by identifying important misconceptions and pitfalls originating from the use of inappropriate AI/ML techniques in the AV area, which, however useful they may be, may be the cause of serious accidents. Then, I raise the curtain a bit on how to break this chicken-and-egg dilemma, presenting some solution avenues based on Cyber Resilience, a core subject of my research. For example, by reconciling the data-level stochastic nature of AI/ML paradigms with the determinism of driving control theory at system level, leveraging the best of both worlds: trustworthiness and intelligence.”